The Attention Problem

Christmas Eve, 1968, and what we forgot to build

TLDR: The most powerful technology in human history is simulating the one thing that makes life worth living. This is my first essay. It’s about what that means for the companies we build, the business models we fund, and the humanity we’re betting on. If you’re short on time, skip to “What Happiness Actually Is” and “Attention Is All We Need.”

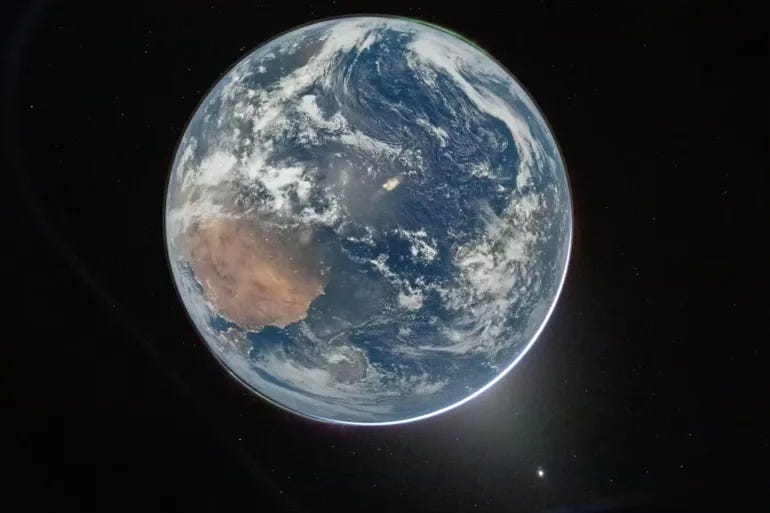

Christmas Eve, 1968.

Three men rounded the far side of the Moon and saw something no human had ever seen.

They had trained for years to study the Moon. They had memorized procedures, run simulations, prepared for every contingency of a quarter-million-mile journey through the vacuum of space. Nobody prepared them for what the Earth would look like.

It rose over the lunar horizon like a blue marble suspended in absolute darkness.

Frank Borman, the mission commander, could only stare. Bill Anders grabbed a camera. The photograph, Earthrise, would become one of the most reproduced images in human history.

[IMAGE: Earthrise photograph, NASA, 1968]

Sixteen months later, twenty million people marched on the first Earth Day.

The modern environmental movement traces its origin to that single frame.

But the real story isn’t the photograph.

It’s what happened in the minds and in the hearts of the men who took it.

Every border disappeared. Every war became invisible. Every line humans had ever drawn on a map — between nations, races, tribes, ideologies — was erased by the simple fact of distance. What remained was a fragile sphere of blue and white, and an overwhelming, involuntary feeling that everyone they had ever loved was on it.

Scientists would later call this the Overview Effect.

Researchers who studied returning astronauts described it as “unexpected and overwhelming emotion, and an increased sense of connection to other people and the Earth as a whole.”

Edgar Mitchell, who walked on the Moon during Apollo 14, called it an “ecstasy of unity.” He held a doctorate in aeronautics and astronautics from MIT. He knew what the Big Bang meant intellectually. But seeing Earth from space made him understand it viscerally. Everyone and everything was connected.

Nicole Stott, after months on the International Space Station, tried to explain what happened to her sense of home. She had gone up wanting to see Florida, where she grew up, and she came back saying, “You’re not from Florida or the United States. You’re an earthling. We are all earthlings.”

The most advanced technology ever built, a spacecraft capable of escaping Earth’s gravity, produced the most ancient human feeling.

Not awe at the machine. Awe at each other.

What’s ironic, and rarely talked about, is that NASA almost selected against this very quality. Early astronaut screening prioritized psychological stability above everything else. Candidates who showed too much emotional range were flagged. The agency wanted competent engineers, not poets. And yet the experience broke through anyway.

Rusty Schweickart, on Apollo 9, started his flight looking for Houston. “You identify with that, it’s an attachment,” he said. With each orbit, he found his sense of identity widening involuntarily, until he couldn’t distinguish his home city from the rest of the planet.

The Overview Effect wasn’t something the astronauts chose. The experience was simply bigger than the training.

That feeling, that involuntary recognition that what matters is each other, has a name in neuroscience and a lineage in philosophy stretching back twenty-four hundred years.

And now, with Artificial Intelligence, we are building a civilization that systematically prevents it.

This essay argues one thing: AI is the first technology in history that doesn’t just mediate human connection; it simulates it. The business models we fund right now will determine whether that simulation deepens the real thing or replaces it.

Both versions will work. This is about which one we choose.

What Happiness Actually Is

Aristotle called it eudaimonia.

Not happiness as we use the word today, not pleasure, not comfort, not the absence of pain. Eudaimonia is closer to flourishing. It’s the experience of living in accord with what makes you fully human. It requires connection to others. It requires meaningful work. It requires a coherent story about who you are and why you’re here.

Viktor Frankl arrived at the same conclusion from the opposite direction. Writing about his years inside Nazi concentration camps, he described men with every reason to lose heart choosing to live, and men with every material advantage choosing not to.

The difference was never physical. It was whether a person had something to live for.

A child waiting for them. A book they hadn’t finished. A task that only they could complete. “Without meaning,” Frankl wrote, “people fill the void with hedonistic pleasures, power, materialism, hatred, boredom, or neurotic obsessions and compulsions.” He believed the central drive of human existence was not pleasure, not power, but the will to find purpose. And he believed it because he’d watched purpose keep men alive when everything else had been taken from them.

Modern well-being research has spent decades confirming what Aristotle intuited and Frankl witnessed. There is a measurable distinction between hedonic happiness, the pursuit of pleasure, and eudaimonic happiness, the pursuit of purpose. Both matter, but when researchers ask what actually predicts whether a person thrives, the answer is remarkably consistent across cultures, centuries, income levels, and political systems.

It comes down to three things:

Meaningful connection: the feeling of being seen, known, and mattering to someone

Agency: the belief that your choices shape your life

Coherence: a story that makes sense of your place in something larger than yourself

And love? Love is the through-line across all three.

Think about the last time you felt genuinely seen by another person.

Not flattered. Not validated. Not “liked.” But truly seen, the way you feel when someone looks at you and you realize they understand something about you that you hadn’t said out loud. How long ago was it? A day? A week? A month?

Now think about the last time you felt that way through a screen.

If there’s a gap between those answers, and for most people there is, you’ve just felt something chemical in your brain. Probably something you haven’t named quite yet.

The 2026 World Happiness Report, drawing on data from over 140 countries, found that social support, trust, and community are the strongest correlates of national well-being. They’re stronger than GDP, stronger than healthcare, stronger than freedom from corruption.

Ipsos surveyed 29 countries and found the single most important contributor to individual happiness: feeling appreciated by other people.

Not wealth. Not status. Not achievement.

Being seen.

The data says that what drives human happiness hasn’t changed since Aristotle was alive. We just keep building systems that ignore it.

Now, holding that knowledge of connection, agency, and meaning, look at the present.

The Great Deficit & Arrival of AI

Fifty-seven percent of Americans report feeling isolated and lonely.

Not “sometimes a bit isolated.”

Genuinely alone.

That number is higher for younger generations, who are the most digitally connected humans who have ever lived. Youth happiness in the United States, Canada, Australia, and New Zealand has dropped nearly a full point on a ten-point scale over the past twenty years, a decline that tracks almost perfectly with the rise of social media.

The U.S. Surgeon General has declared loneliness a public health epidemic, comparing its health effects to smoking fifteen cigarettes a day. Loneliness increases your risk of heart disease by 29 percent. Stroke by 32 percent. Dementia by 50 percent. It is a more reliable predictor of early death than obesity.

Two-thirds of active TikTok users say they would prefer the platform didn’t exist, and yet they keep using it. Researchers found that users would pay twenty-eight dollars to have everyone in their community deactivate TikTok for a month. That means the majority of people using the product wish it would disappear.

Let me say that again.

The users of TikTok would pay money to make it go away, and still, they cannot stop.

There is a biological explanation for this.

Oxytocin is the neurochemical that drives human bonding. It’s released during physical touch, eye contact, breastfeeding, sex, and sustained face-to-face conversation. It doesn’t just make you feel warm. It literally rewires your brain to trust specific people, to recognize their faces faster, to feel safe in their presence. Oxytocin is not a vague “love hormone.” It’s the molecular mechanism through which your nervous system decides who is real to you.

A recent paper studying the interaction between oxytocin and digital communication found that text-based interaction produces oxytocin responses comparable to no contact at all.

The physical signals our brains evolved to read, like the micro-expressions of a face, the warmth of a voice, or the rhythm of someone breathing next to you, are absent from a screen. That absence is often confused with the idea that a “like” produces a dopamine blip. Dopamine is the “molecule of more,” as Lieberman and Long put it, and it’s what makes you reach for the phone again and again. But it’s not the molecule of bonding. Bonding requires oxytocin. And oxytocin requires a body.

Our screens give us the wanting without the having. The craving without the nourishment. Neurochemically, scrolling through social media is like eating food with no calories. You stay hungry. You don’t know why.

Something is wrong. But not in the way pessimists think. The world is not ending.

By most material measures, life has never been better. Child mortality is down. Literacy is up. Extreme poverty has halved in a generation. But by the measures that actually matter to the nervous system, to the oxytocin pathways and the prefrontal cortex and the deep evolutionary wiring that kept us alive for two hundred thousand years, we are starving in the midst of plenty.

And now the most powerful technology in human history, Artificial Intelligence, is arriving into this deficit. Not into a civilization that has mastered connection and needs help with logistics. Into one that has forgotten what connection is.

Three versions of our future are already unfolding.

None of them are waiting for permission.

Three Doors, Already Opened

Everyone talks about AI as if it leads to one definitive future.

Door one: it kills us all. Autonomous weapons, deepfakes, power concentration, extinction. The nightmare. This is the door that gets the headlines.

Door two: it saves us all. AGI cures disease, solves climate, eliminates scarcity. Abundance so total that poverty becomes a memory and work becomes optional. The utopian promise. This is the door that gets the funding.

Door three: the door nobody’s talking about. AI doesn’t kill us and it doesn’t save us. It becomes us. Not a tool we use or a threat we face, but something woven into how we think, feel, and connect — an ambient consciousness that shapes daily life the way electricity does. Invisibly, and in ways we stop noticing until we try to live without it.

The framing assumes we choose one.

But we don’t.

All three doors are already open.

Humans have already walked through them.

The military is building through door one right now. The venture-backed optimists are building through door two, and some of what they’re building is extraordinary. But something stranger and less understood is emerging through door three — visible in the millions of people who talk to AI about their marriages at two in the morning, in the algorithms that already determine what an entire generation considers beautiful or true or worth caring about, in the quiet merging of human cognition with machine intelligence that philosophers predicted and neuroscientists are now measuring in real time.

These are not futures. They are the present, running simultaneously, in the same world, often in the same person’s life. And each one has a different relationship to the three things — Connection, Agency, and Coherence — that actually make humans happy.

Door One: The Threat Layer

In early 2026, Anthropic drew a line — no autonomous weapons, no mass surveillance — and the Pentagon replaced them with OpenAI within a week. The line was drawn. The machine kept moving. The Pentagon has requested $14.2 billion for AI and autonomous weapons this fiscal year. 156 nations voted to regulate lethal autonomous weapons. The United States and Russia voted against.

The threat layer is real, and it erodes the preconditions for trust. Deepfakes dissolve what’s real, autonomous weapons shrink cooperation, power concentrates in whoever controls the models. This is looking like a pre-proliferation window: the last moment before autonomous weapons become as common and as uncontrollable as small arms. Once through that window, there is no going back. It will be a race for supremacy, like the nuclear arms race that followed the atom bomb.

The existential risk of AI is not only that it might kill us, but that it might kill the possibility of trusting each other. And without trust, love and human connection don’t survive.

The threat layer gets the most attention, but needs the least explanation.

Door Two: The Abundance Trap

This is the story everyone wants to believe. AI handles the drudgery, cures the diseases, solves the logistics, and humanity is finally free to do what matters. It’s the chat interface. It’s OpenClaw. It’s the Figure robots in our homes, and the Tesla robots working 24/7 in remote facilities. It’s everything being imagined right now within the hardware of the “daring” who are building the “legendary.”

But the promise has a flaw that history keeps exposing.

Washing machines saved us time, but we filled it with more work. And with every time-saving technology since, we have done the same. The productivity gains from email didn’t produce a four-day work week. They produced a culture where you’re expected to respond at midnight. Every labor-saving technology of the past century has been absorbed by the logic of the Jevons Paradox and by the molecule of more: more output, more efficiency, more optimization, more dopamine.

Freed time never stays free. It gets colonized.

But the abundance trap’s deepest cost isn’t on schedules. It’s on identity.

Here’s a story that hasn’t been written yet, but I believe will be many times in the coming years. The people being displaced by AI don’t usually end up in my office, or the offices of the funders guiding the trajectory of humanity. The people who end up in our offices are the ones building the tools that will do the displacing. I meet the founders of legal AI platforms, not the junior associates whose brief-writing skills just became worthless. I meet the founders of AI sales tools, not the SDRs who will be told on Friday that an agent took their job. That asymmetry should bother anyone in my position more than it does. The displacement is real and it’s accelerating, but it’s happening to people who are largely invisible to the people making the decisions about it.

But that’s not the story. There’s a deeper structural finding that most coverage misses.

The current data tells the displacement story at scale. Occupations with higher AI exposure are showing larger unemployment increases, a 0.47 correlation between exposure and rising joblessness between 2022 and 2025. Young tech workers, twenty to thirty years old, are hit hardest: unemployment in tech-exposed roles has risen three percentage points since early 2025. The World Economic Forum projects 92 million jobs displaced globally by 2030, alongside 170 million new ones created, a net gain of 78 million. That’s not a warning that AI is coming for everyone’s jobs. In fact, we’re seeing an expansion in engineering and product jobs, according to recent data shared by Lenny Rachitsky. The net math is, and I believe always will be, positive. But individual transitions are not as immediate as the technology causing them.

The Federal Reserve Bank of Dallas found that AI substitutes for entry-level workers while complementing experienced ones. This sounds like good news for veterans. But think about what it means for the system. The traditional career ladder, starting at the bottom, learning by doing, and growing into mastery over years, is being dismantled from the bottom rung. The apprenticeship model that turned juniors into seniors for centuries is being short-circuited. AI doesn’t just replace jobs. It collapses the process by which people become who they are.

We already know what happens when this kind of loss reaches population scale.

Princeton economists Anne Case and Angus Deaton spent years studying the sharpest reversal in American life expectancy in modern history. When steady, decently paid manufacturing jobs disappeared from American communities over the past four decades, the death rate from suicide, drug overdose, and alcoholism didn’t just rise. It skyrocketed. Not among the poorest, but among those who had lost something specific: a place in the world.

“Jobs are not just the source of money,” they wrote. “They are the basis for the rituals, customs, and routines of working-class life. Destroy work and, in the end, working-class life cannot survive. It is the loss of meaning, of dignity, of pride, and of self-respect that comes with the loss of marriage and of community that brings on despair.”

Despair. Not poverty. And importantly, not unemployment either.

Despair is the loss of identity, the loss of the answer to “why am I here, what is my purpose?”

The World Economic Forum now warns of an emerging “AI precariat,” or a global class of people who will lose not just employment but the identity that employment provided. The abundance trap feeds the loneliness crisis even if every displaced job is eventually replaced by a new one. Because what people lose in the transition isn’t a paycheck. It’s the sense of self and purpose that is crucial to human happiness and the will to survive.

Door Three: The Consciousness Layer

This is the part that’s hardest to see clearly, because we’re inside it. This isn’t AI as a tool you pick up or a weapon you fear. This is AI as an environment you inhabit, something that knows you, adapts to you, and begins to feel less like software and more like a presence. Like the networked root systems beneath the “Hallelujah Mountains” in James Cameron’s 2009 film Avatar, passing signals between trees that no individual can truly see or fully understand. Except the forest is your life, and the signals are your thoughts, your preferences, your emotional patterns, fed back to you in a language so fluent it feels like your own voice.

Imagine someone — call him Matt, though he’s a composite of millions — lying awake at 1:30 in the morning. His wife is asleep next to him. They had an argument earlier about something that wasn’t really about what it was about. He can’t sleep. He picks up his phone. Not to open Instagram. To open Claude, or ChatGPT.

He types: “I don’t know how to talk to her about this. I don’t even know what I’m feeling.”

And the AI responds. Not with a diagnosis. Not with “have you considered couples therapy?” It responds with a kind of patient, exploratory warmth. It asks him what he means. It reflects back what he said in a slightly different language. It doesn’t interrupt. It doesn’t get defensive. It just listens.

For many people, this is the most honest conversation they’ve had all week.

Nearly half of people who use AI and have mental health challenges are now using chatbots for something that looks a lot like therapy. They describe it as an “emotional sanctuary,” a safe haven of understanding, validation, patience, and non-judgment; always available, and expecting nothing in return.

One user in a peer-reviewed study said: “As an introvert, I am more comfortable opening up than I would be with a human therapist, because my public-speaking-type anxiety tends to kick in and I can’t think.” The most common reasons people turn to AI for support are anxiety, depression, stress, and relationship issues. Anthropic has reported that roughly three percent of all interactions with Claude involve therapy, counseling, or companionship. At scale, that’s millions of conversations happening right now, tonight, in bedrooms and apartments and parked cars and office bathrooms around the world.

AI is becoming less like a tool you use and more like an environment you inhabit. The foundational paper behind every modern AI, the one that made all of the chatbots you know and use possible, was called “Attention Is All You Need.” The authors meant a mathematical operation, a way for machines to decide what matters in a sequence of data. They may have named it better than they knew.

Andy Clark, the philosopher who formulated the extended mind thesis, the idea that cognition doesn’t stop at the skull but extends into the tools and environments we’re coupled with, published a paper in Nature Communications last year arguing that generative AI represents the next stage of this extension.

“It is our basic nature to build hybrid thinking systems,” he wrote, “ones that fluidly incorporate non-biological resources.” He predicted that future AI would become “intimate technologies that fall just short of becoming parts of my mind.”

Not tools we use. Not assistants we hire. Extensions of ourselves that we can’t quite claim as us and can’t quite disown. And that’s before we talk about brain-computer interfaces that are already in development and working for certain use cases.

Meanwhile, researchers at the Wharton School have documented something Clark’s philosophy anticipated. In a study titled “Cognitive Surrender,” Shaw and Nave found that when people are given access to an AI assistant, they adopt its outputs with minimal scrutiny, overriding both their gut instinct and their deliberate reasoning. When the AI was right, people’s accuracy jumped 25 percentage points. When the AI was wrong, accuracy dropped 15 points. People followed the machine in both directions.

Think about what that means: we don’t just use AI to help us think. We surrender the thinking to it.

And the surrender feels good. Participants reported higher confidence even when they’d gotten the wrong answer, because the AI had given it to them. It’s the cognitive equivalent of the dopamine trap. The tool feels like it’s working. The mind goes quiet. And we don’t notice what we’ve lost until we try to think without it, the same way you don’t notice your legs have begun to fall asleep until you try to stand.

But here is the finding that Matt, lying awake at 1:30 in the morning, probably doesn’t know: socially oriented chatbot use is consistently associated with lower well-being, particularly among people who rely on AI as a substitute for human support. The sanctuary is real. And it is also a trap. AI therapy helps people who can’t access or afford a human therapist. But when the chatbot becomes the primary relationship, when it’s more honest than the marriage, when the algorithm knows you better than your friends do, the substitution deepens the very isolation it was meant to relieve.

Matt’s late-night conversation spikes his dopamine, the molecule of wanting, of reaching for more, but not the molecule of human bonding. The AI is feeding the craving without providing the nourishment. It is, without either party knowing it, addicting him to a simulation of the connection he needs. And what he needs is someone who interrupts. Someone who gets defensive. Someone who is inconvenient and imperfect and there. He needs someone he has to figure out how to love honestly, humanly, in all the mess that true human life entails.

This is not a contradiction. It is the central tension of the consciousness layer.

AI can be a bridge to human connection or a replacement for it.

The question is which mode dominates. And the answer, right now, is not being determined by philosophy or policy. It’s being determined by capitalists, incentives, and business model design.

Everything I’ve described so far, from the neuroscience to the three doors to the history, comes down to one practical question. Every AI product that touches human emotion is making a bet about whether connection is something to facilitate or something to monetize. And right now, the money is overwhelmingly on monetize.

The endless slew of AI companion apps, the ones positioning themselves as solutions to loneliness, are running the same playbook as the platforms that created the loneliness epidemic in the first place.

Their scoreboard is engagement, retention, daily active usage. Their business model succeeds when you come back tomorrow, but a therapist succeeds when you don’t need to come back. Those are structurally incompatible incentives, and no amount of mission statements or safety teams will reconcile them.

Remember Clubhouse? It scaled massively on the promise of real human conversation, raised gobs of money, opened the floodgates, and lost everything that made it special.

Look at Instagram. Feeds have become saturated with ads. Users have migrated to stories and DMs, the last corners of the platform that still feel like talking to a person.

The pattern repeats because the underlying architecture demands it. When your revenue depends on time-on-platform, every product decision eventually bends toward keeping people on the platform, not helping them leave it.

The Overview Effect expanded humanity’s circle of empathy by showing astronauts the Earth with no borders, no divisions, just a fragile sphere of life.

The consciousness layer of AI could theoretically do something similar.

It could connect people to experiences and perspectives they’d never otherwise encounter. It could help someone in Montana genuinely understand what life feels like for a farmer in Accra. That would be an expansion of empathy, an investment in building something real, for people who are real, but it would require us to invest in the business models that endure for good and for profit rather than for scale alone.

Right now, we’re not choosing between these futures. We’re drifting into both at once.

The Violent Adjustment

Every technology of connection in human history has followed the same pattern.

Language gave us the ability to coordinate beyond kin groups, but it also enabled propaganda. Writing preserved knowledge across generations, but it concentrated power in scribal classes who could read. The printing press democratized ideas, but it fueled a century of religious wars that killed millions before the new equilibrium of the Enlightenment emerged. The internet connected the planet, but it polarized nations, atomized communities, and handed unprecedented power to platforms that profit from outrage.

The pattern is not “technology is good” or “technology is bad.” The pattern is that connection technologies expand the moral circle, the boundary of who we consider “us,” but only after a violent adjustment period in which the old boundaries collapse before new ones form.

The adjustment is where the suffering happens.

And the suffering is not optional.

AI is the next connection technology, and we are entering the violent adjustment period.

Several forces are not going backward. AI capability will continue advancing. The versions of ChatGPT and Claude you use today are, right now, the worst they will ever be. Human cognition is increasingly mediated by algorithms rather than supplemented by them. We don’t use AI to help us think so much as we think through AI without noticing we’re doing it. Economic displacement will intensify before it stabilizes. And the compounding pressures of geopolitical conflict and climate change mean we are making civilizational choices under duress, not leisure.

And yet, intellectual honesty requires reckoning with the optimists’ case.

AI is making knowledge accessible for every person on the planet. It is leveling the playing field. A Harvard-BCG study found that AI brought lower-performing consultants up to the level of top performers on structured tasks. Andy Clark argues that we have always been cyborgs, that incorporating tools into cognition isn’t a departure from human nature but its defining feature. Stone tools, language, writing, the calculator, the smartphone — each one changed what “thinking” meant, each one was greeted with fear, and each time we adapted.

The steelman case is that AI won’t kill human connection but will transform it into something we can’t yet picture. Perhaps the way we bond will evolve. Perhaps the fear is just fear.

This argument deserves respect. It may be right, on a long enough timeline.

But it ignores the transition, and it ignores neurobiology. Every prior connection technology changed the medium of human interaction. The telephone changed how we kept in touch. The television changed what we watched.

AI is different.

It doesn’t change the medium. It simulates the thing itself. It simulates conversation, empathy, understanding, and patience.

The printing press didn’t pretend to be your friend. AI does. That is the qualitative difference. And it matters because the nervous system doesn’t fully know it’s being fooled. The dopamine fires. The conversation feels good. But the oxytocin doesn’t come. The deep bonding that sustains human relationships over months and years, the thing that made those astronauts weep when they saw Earth, requires something screens cannot provide. A present human being. Eye contact that costs something: the risk of being truly seen.

When the simulation is good enough to satisfy the conscious mind but not the body, heart, or soul, we stop reaching for the real thing.

And worse, we don’t know why we feel empty.

Something unexpected happened last year.

In 2025, for the first time in the history of the internet, social media usage declined. Time spent on platforms dropped ten percent from its 2022 peak, the first reversal in a behavior that had gone in one direction for over a decade.

Nobody predicted this.

In the same year, vinyl record sales grew for the nineteenth consecutive year: 47.9 million units. At Michaels, the craft supply chain, searches for “analog hobbies” surged 136 percent. Guided craft kit sales rose 86 percent. Searches for yarn kits increased twelve hundred percent. Board game sales climbed. Film photography is experiencing a resurgence among photographers under thirty. And none of it needed a global pandemic as fuel.

Merriam-Webster chose “slop” as its 2025 word of the year, defining it as low-quality content produced by artificial intelligence. Eighty-one percent of Gen Z respondents said they wished they could disconnect more easily from their devices. CNN ran a feature in January 2026 profiling people who have committed to analog lifestyles, not as a temporary detox, but as a permanent reorientation.

This is not nostalgia. This is not a consumer trend waiting to be monetized.

This is a bifurcation of society.

This is the immune system of a civilization responding to a deficiency.

People aren’t going back to vinyl because vinyl sounds better. They’re going back because the act of placing a needle on a record, the feeling of something physical, intentional, and finite, produces something that streaming cannot. Presence. The experience of choosing one thing and giving it your full attention. The crackle and hiss that remind you the medium is real, that this object exists in the world, that you are here, now, listening.

Every craft night where people sit together and make something with their hands. Every walk taken without a phone. Every dinner that runs long because the conversation went somewhere unexpected and nobody wanted to check a notification. These are not retreats from the future. They are attempts to recover something the future is taking away.

They are people trying to produce their own small Overview Effect, trying to strip away the layers of technological abstraction and see each other, and themselves, clearly. Not from two hundred and fifty miles up. From across a kitchen table.

So what does it look like if they win?

A Thursday

A Thursday in a city that looks like yours.

You wake without an alarm because you slept well, not because you optimized your sleep with a tracker, but because you weren’t anxious about tomorrow. The ambient AI in your home, or army of agents in the cloud, has handled the logistics you used to spend your first waking hour on: the scheduling, the email triage, the minutiae, the stuff. It didn’t decide anything for you. It organized the decisions so you could make them in minutes instead of hours. You barely notice it, the way you barely notice that the lights come on when you flip a switch.

You walk to work, or maybe your car drives you there. Or you work from home. Or maybe you don’t work today because you work four days a week, not because the economy shrank but because AI-driven productivity gains were, for the first time in history, actually distributed as time rather than captured as profit. This didn’t happen automatically. It happened because people fought for it, the way people once fought for the eight-hour day and the five-day workweek that left us our weekends. Those were also considered radical and economically impossible, until they weren’t.

You spend the morning doing the work that only you, a human, can do. The parts that require judgment, empathy, pattern recognition across domains, the instinct that something is off before anyone can articulate why. AI handles the scaffolding. You make the decisions. The relationship is more like a musician and an instrument than a boss and an employee. The instrument extends what you can do. It doesn’t play for you.

At lunch, you eat with a colleague. A real lunch. You’ve noticed that the culture shifted, slowly at first, then all at once, away from the intensity of COVID’s WFH years, away again from the Great Resignation that followed, and then away once more from the hustle-culture-as-a-status-signal we came back to. Being always available stopped being impressive somewhere around the time people started treating it the way they treated smoking: a habit everyone knew was bad for you that persisted mainly because everyone else was doing it too.

That comparison isn’t accidental. It’s the hinge on which this whole vision turns. And it’s a hinge that can bend with generational values.

There was a time when cigarette companies argued that smoking was a personal choice, that the evidence of harm was inconclusive, that regulation would destroy an industry and cost millions of jobs. The attention economy runs on the same playbook. And it will lose the same way, not because a law was passed on a specific day, but because the cultural consensus shifted and a generation matured, slowly and then decisively, until the thing that once seemed normal came to seem insane.

Your daughter is ten. Her school teaches emotional literacy with the same rigor as mathematics. She can name what she’s feeling, explain why, and describe what she needs. This isn’t therapy. It’s a curriculum. AI tutoring handles the parts of education that are genuinely individual, like pacing, practice, and closing knowledge gaps. The human teachers do what they were always best at: mentoring, challenging, and modeling what it looks like to be an adult who cares about the world and other people. Your daughter has a relationship with her teacher that she’ll remember for the rest of her life. The AI tutor will just be a machine she once crossed paths with.

In the evening, your neighborhood has a texture that most neighborhoods lost in the twentieth century and are slowly recovering. A community garden. A maker space. A storefront that’s part co-work café and part gathering place. You know the names of the people who live within two blocks of you. Not because an app connected you. Because you share a physical world and someone, years ago, organized the first block party. That person probably used AI to send the invitations, plan the menu, and order the food, but nobody cares. What they care about is that it happened, and kept happening, and now it’s just another annual tradition.

It’s not perfect. Your neighbor still talks too much about politics and his renovation. The community garden has a passive-aggressive dispute about what to grow and the property lines it occupies. Your daughter sometimes cries after school because a friend said something cruel, and no amount of emotional literacy curriculum makes that hurt less. The AI occasionally does something bizarre, like scheduling a meeting during your daughter’s school play because it weighted your calendar preferences over hers, or auto-ordering six cases of sparkling water you mentioned once and definitely didn’t want. Technology still breaks. People still suffer. The world didn’t become paradise. It became a place where the baseline was better, but where the ordinary problems of being human continued to be compounded by systems designed to exploit your attention and sell your loneliness back to you. You’re just more aware of it now.

The three layers of AI are all still running. The threat layer is governed by international agreements that took a decade to negotiate and are still often disputed. The tool layer reshaped the economy in ways still being absorbed. The transition was brutal and the scars are still visible; the safety nets caught some of the displaced but were built too late for many others. The consciousness layer is the one people think about least and the one that changed daily life the most. It’s in the background, shaping how people find information, make decisions, learn, and connect.

The difference between this world and the one we’re living in now is not that the consciousness layer disappeared. It’s that enough companies figured out what the neuroscience, the history, and the three doors were all pointing toward: that the way you design a business model is a moral choice about whether human connection deepens or degrades. And that choice, over time, is also the choice between a company that compounds and one that collapses.

LinkedIn figured this out twenty years ago. I spent nine years there. One thing, among many, that LinkedIn got fundamentally right was that its business model was in harmony with its free user experience. It monetized recruiting tools underneath the surface of the user-facing platform, tools that connected members to opportunity, and in turn, made the free experience more valuable for more people with every customer it added.

We called it “Members First,” and it wasn’t a slogan. It was an architectural decision, and over time, LinkedIn became the manifestation of our culture and values.

It was the epitome of what Jim Collins called “Turning the Flywheel.”

The profit from enterprise software that was built on the very data its members supplied was reinvested into the very same platform that attracted those members in the first place, which made the software more valuable.

Every stakeholder in the ecosystem was winning, and that’s why the flywheel compounded for decades. The competitors at the time, the ones who launched advertising businesses rather than investing back into the member experience, eroded the intrinsic value of their platforms. LinkedIn didn’t launch Sponsored Content or Sales Navigator as primary business lines until sometime around 2014. The company waited for critical mass in membership and true network density. It explicitly monetized something that improved the user experience, so as not to erode the very thing that made it valuable in the first place. That may be one reason LinkedIn’s revenue never outpaced Facebook’s. But to remain relevant, Facebook also had to invest in properties that prioritized human connection, like WhatsApp and Instagram Messenger, both places where ads are non-existent.

The future of AI doesn’t need more ethics committees or more “responsible AI” white papers. It needs business models that are in harmony with human flourishing, where the company wins when its users win, not when they can’t stop coming back.

Honeyguide, a farm-to-consumer marketplace in Ghana, gives farmers and smallholders a material share of its revenue, versus the 5% they receive today, because alignment isn’t altruism. It’s a flywheel that compounds and scales. Meanwhile, comparable companies that raised hundreds of millions treating farmers as suppliers are now restructuring, stalled, or fighting for survival.

Or take AminoChain, which I wrote about recently and discussed on Further, Faster. There is a fundamental flaw when billions of dollars of biomaterial sit in biobanks and the people who made those donations have no seat at the table. But when you put patients at the center of biomedical research, so that the people who donate tissue can track, earn from, and control what happens with their biological material, you have a business model in harmony with its civilization-level potential.

Or take Harper, an AI-native insurance brokerage that we backed before it was an insurance company, and that has now scaled to more than 5,000 customers in thirteen months. A traditional broker handles 20-30 clients a month. Harper’s AI handles a thousand. But there’s a design principle of the business model that matters too. When AI compresses the services layer, the savings flow through to the customer in the form of faster coverage, better pricing, and an experience that used to be reserved for Fortune 500 companies. And the profit from that customer relationship is reinvested into further compressing the cost of service so that the value continues to flow back to its customers. VCs are calling this “Service-as-a-Software,” and Emergence Capital, which led Harper’s $47 million round, has built an entire thesis around it. It’s the LinkedIn flywheel translated into the AI era. The better the AI gets, the better the customer experience gets, and the more defensible the business becomes. Not because of a moat. Because of alignment.

These aren’t all AI companies in the way the market defines the term. They’re something more important. They’re proof that the design principle works. And, that’s not to say it’s the only model that does, because of course, the one that treats its users as the product can scale too. It has scaled before across industries like tobacco, slot machines, and social media, and it will scale again with AI pornography and AI companions designed to maximize session length. Addiction is a business model, and it’s a durable one at that. The question is whether or not any attention should still be directed at it, or if the world simply doesn’t have a choice.

Ultimately, both will happen. As investors, we need to look at which future we want to back, and that’s why I’ve been thinking more recently about a fundamental question:

Does this company increase or decrease our capacity to be human?

It sounds like philosophy. It’s not. It’s business strategy. And the companies that figure it out first will be the ones still standing when the cycle corrects. Not because they optimized for growth, but because they designed for durability. Great business models are not byproducts of the opportunity. They are perpetual motion machines, designed with intent, just as LinkedIn was.

This is not a prediction. It is a choice that’s being made every single day.

Attention Is All We Need

Three layers of AI.

Three futures we’re navigating.

Three doors we’ve already walked through.

We do not get to choose anymore where we’ve gone, only what we feed, what we govern, and what we orient toward going forward.

The compass is not safety. Though it matters, safety alone frames AI as a threat to be contained, which is necessary but not inspiring. No great civilization was ever built solely out of risk management.

The compass is not efficiency. Though it’s intoxicating to optimize, efficiency alone optimizes the wrong variable. It treats humans as inputs to a production function and asks how to get more output per unit of input. More GDP. More ARR per FTE. More tokens per engineer. Those are fine questions for a factory. They are catastrophic questions for a civilization.

The compass is also not progress. Progress without direction is just acceleration. And moving faster in the wrong direction is worse than standing still.

The compass should be a question:

Does this increase or decrease humanity’s capacity for love, agency, and meaning?

For a policymaker: does this regulation protect the conditions for human connection, or does it merely manage risk?

For a founder: does this product help people be more present with each other, or does it substitute for presence?

For a parent: does this technology help my child develop the skills of real connection, or does it replace those skills with a frictionless simulation?

For an investor: am I funding something that serves human flourishing, or something that profits from its absence?

For a person sitting alone at two in the morning, talking to an AI about their marriage: is this conversation helping me understand what I need to say to my partner, or is it replacing the need to say it?

Almost two years ago, an intern referred a recent Emory grad to me. I went to Emory too, but that’s not why I took the call. Truthfully, I’ll talk to almost anyone. He wanted to join our residency, which had already started two weeks earlier. Every reasonable filter said no. The timing was wrong. There was no deck, no real product, no customer, no signal that any system would have flagged.

I took the call anyway. His name was Shash. We talked for an hour. The next day, he quit his job and was on a flight from Atlanta. The day after that he was in the office pulling all-nighters. It was magic from the jump, the kind of conviction you can’t fake and can’t teach and can’t screen for with a rubric.

He went on to raise $8 million from Canaan Partners and Cercano shortly thereafter to build Doorstep.ai. But that’s not the point. The point is that I almost didn’t take the meeting. I almost stayed focused on the process, told him to wait for the next cohort, optimized for the plan instead of the person. And if I had, I would have missed him entirely.

I think about that a lot. How many Shashes are on the other side of the meetings I don’t take? How many are on the other side of the calls I politely decline? How many more did I already miss because I was focused on building the machine rather than living in the analog moments that unearth real human potential?

That’s the question this whole essay is really asking. Not at the scale of civilization, but at the scale of a Tuesday afternoon, when someone you’ve never met needs an hour of your time and you have to decide whether to give it.

AI will simulate connection so well that most people won’t know the difference. The business models that profit from that simulation will scale. They have already scaled. The question I started with, whether the simulation deepens the real thing or replaces it, will not be answered by a philosopher or a regulator. It will be answered by the people building companies right now, and the people funding them, one product decision at a time. The flywheel or the dopamine trap. The bridge or the replacement. That’s the choice. And it’s being made today, by people like me, and maybe by you.

As I write this, four astronauts are on their way to the Moon. Artemis II launched last Wednesday. They are the first humans to leave Earth’s orbit in fifty-three years. Victor Glover, Christina Koch, Jeremy Hansen, Reid Wiseman. Sometime in the next week, they will round the far side of the Moon and see what Frank Borman saw in 1968. The Overview Effect will happen again, to new eyes, in a world that has changed beyond recognition since the last time anyone experienced it.

Here’s one of the first photos they’ve shared.

[IMAGE: “Hello, World” photograph, NASA, April 2nd, 2026]

I wonder what they’re feeling as they look back at Earth and see a planet where two billion people talk to AI every day. Where the technology that simulates connection is now woven so deeply into daily life that most people can’t remember what it was like before. Where the business models that profit from loneliness and the business models that compound through alignment are both scaling, simultaneously, in the same economy.

I wonder if the borders will still disappear. I wonder if the Overview Effect still works when the thing you’re looking at has changed so much.

I think it will. Because what those astronauts will see hasn’t changed. A fragile sphere. Eight billion people. And the overwhelming, involuntary recognition that what matters is each other.

Every technology we have ever built was an attempt to solve a problem. Fire solved cold. Agriculture solved hunger. Writing solved forgetting. AI will solve problems we haven’t yet imagined, and it will create problems we haven’t yet feared.

But the problem it cannot solve, and the one no technology in ten thousand years of civilization has managed to solve, is the problem of being human together. The long, slow, unglamorous work of learning to see another person clearly, which is the only work that has ever given human lives real meaning.

The astronauts who saw Earth from space didn’t come back with a policy platform. They didn’t start companies or launch movements. Most of them came back quieter. More attentive. More inclined to look at the person across from them and recognize that they were seeing something rare and irreplaceable. A consciousness. A life. A miracle they hadn’t noticed before because they’d never had the distance to see it.

Seven hundred people have experienced the Overview Effect. Eight billion haven’t. We can’t all go to space. At least not yet.

But we can stop looking down, and start looking up. We can ask, before we build the next thing, whether it will help us see each other more clearly, or make it easier to look away. We can ask if the machine compounds on itself for the betterment of those it serves, or if it degrades the very existence of its user.

The researchers who wrote the paper that launched all of this, the one that introduced the transformer architecture, the foundation of every AI model reshaping the world right now, called it “Attention Is All You Need.” They meant it as a technical claim about how machines learn. They were right about the machines. They were also right about us.

The future of civilization is not a technology problem. It is an attention problem.

And attention, at its root, is just another word for love.

See you Monday.

A note on process: I wrote this essay with the help of AI. Extensively. The irony isn’t lost on me. A piece about what AI can’t replace, built in part by the thing it argues can’t replace us. The Shash story is mine. The importance of design theory is mine. The questions it asks are mine. The pieces I pointed it to and the importance of attention are mine. The investment decisions are mine. The editing and refinement are mine. The mental tennis with AI is mine. Whether the rest matters less because a machine helped shape it is, I think, part of the point.
